Building real-time data pipelines with Apache Kafka and Apache Samza.

Apache Kafka® is a distributed streaming platform. What exactly does that mean?

We think of a streaming platform as having three key capabilities:

- It lets you publish and subscribe to streams of records. In this respect it is similar to a message queue or enterprise messaging system.

- It lets you store streams of records in a fault-tolerant way.

- It lets you process streams of records as they occur.

What is Kafka good for?

Kafka is generally used for two broad classes of applications:

- Building real-time streaming data pipelines that reliably move data between systems or applications

- Building real-time streaming applications that transform or react to the streams of data

To understand how Kafka does these things, let's dive in and explore Kafka's capabilities from the bottom up.

First, a few concepts:

- Kafka is run as a cluster on one or more servers.

- The Kafka cluster stores streams of records in categories called topics.

- Each record consists of a key, a value, and a timestamp.
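The concepts above can be sketched as a small in-memory model. This is only an illustration, not Kafka's API: `Record`, `ToyCluster`, and the topic name `page-views` are hypothetical names introduced here, and a real cluster persists and replicates records across servers rather than holding them in a Python dict.

```python
import time
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Record:
    """Toy stand-in for a record: a key, a value, and a timestamp."""
    key: Optional[bytes]
    value: bytes
    timestamp_ms: int


class ToyCluster:
    """Hypothetical in-memory 'cluster' that files records under topic names."""

    def __init__(self) -> None:
        # topic name -> records in the order they were published
        self._topics: Dict[str, List[Record]] = {}

    def publish(self, topic: str, key: Optional[bytes], value: bytes,
                timestamp_ms: Optional[int] = None) -> Record:
        # Default to the current wall-clock time if no timestamp is given.
        if timestamp_ms is None:
            timestamp_ms = int(time.time() * 1000)
        record = Record(key, value, timestamp_ms)
        self._topics.setdefault(topic, []).append(record)
        return record

    def records(self, topic: str) -> List[Record]:
        # Return a copy so callers cannot mutate the stored stream.
        return list(self._topics.get(topic, []))


cluster = ToyCluster()
cluster.publish("page-views", b"user-1", b"/home", timestamp_ms=1000)
cluster.publish("page-views", b"user-2", b"/pricing", timestamp_ms=1005)
print(len(cluster.records("page-views")))  # prints 2
```

Keeping the record immutable (`frozen=True`) mirrors the idea that a published record is a fact in a stream, not a mutable row.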

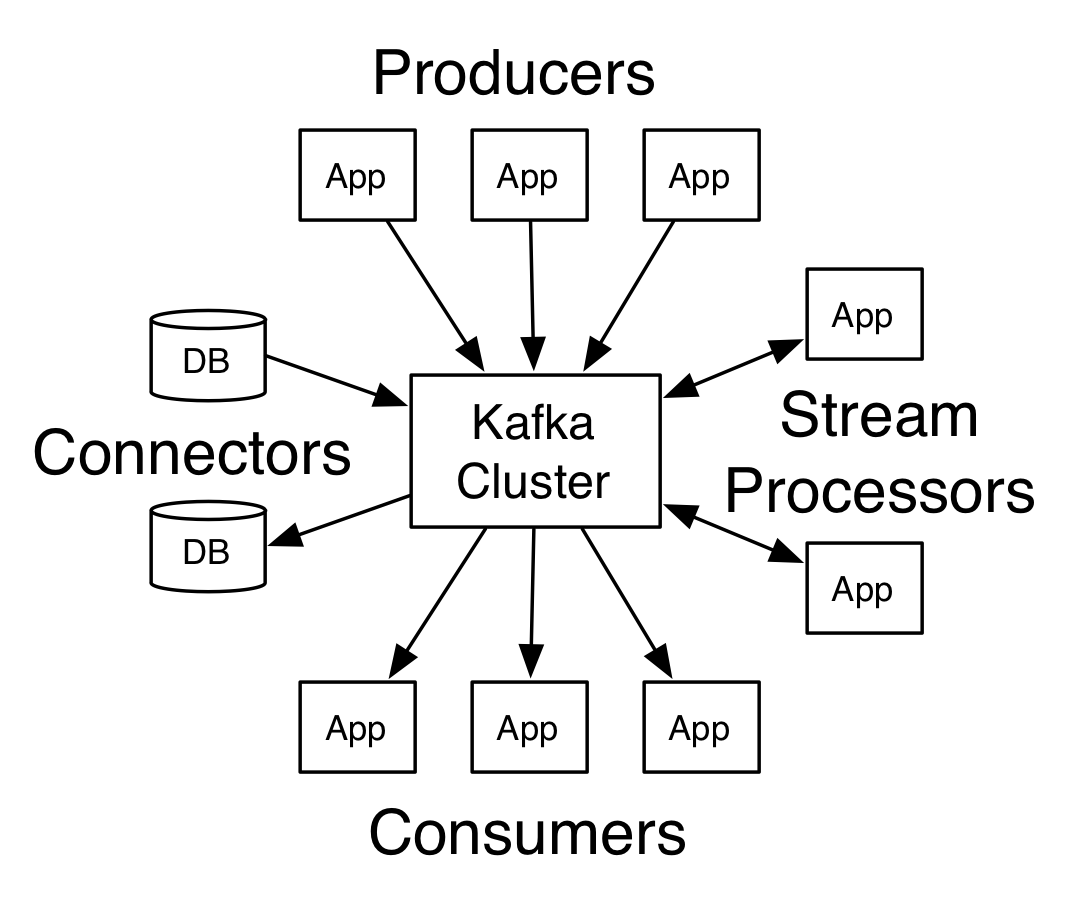

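Kafka has four core APIs:

- The Producer API allows an application to publish a stream of records to one or more Kafka topics.

- The Consumer API allows an application to subscribe to one or more topics and process the stream of records produced to them.

- The Streams API allows an application to act as a stream processor, consuming an input stream from one or more topics and producing an output stream to one or more output topics.

- The Connector API allows building and running reusable producers or consumers that connect Kafka topics to existing applications or data systems.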

In Kafka, communication between clients and servers uses a simple, high-performance, language-agnostic TCP protocol. This protocol is versioned and maintains backward compatibility with older versions. We provide a Java client for Kafka, but clients are available in many languages.

Topics and Logs

Let's first look at the core abstraction Kafka provides for a stream of records: the topic.

A topic is a category or feed name to which records are published. Topics in Kafka are always multi-subscriber; that is, a topic can have zero, one, or many consumers that subscribe to the data written to it.
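The multi-subscriber behaviour can be illustrated with a toy model in which each subscriber keeps its own read position over the same stored records. This is a sketch under simplifying assumptions, not how Kafka is implemented: `ToyTopic` and the subscriber names are hypothetical, and new subscribers here always start from the first record.

```python
from typing import Dict, List


class ToyTopic:
    """Hypothetical in-memory topic with independent subscribers."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._log: List[str] = []            # records in publish order
        self._positions: Dict[str, int] = {}  # subscriber -> next index to read

    def publish(self, value: str) -> None:
        # Publishing works even when nobody is subscribed.
        self._log.append(value)

    def subscribe(self, subscriber: str) -> None:
        # Assumption: a new subscriber starts at the beginning of the topic.
        self._positions.setdefault(subscriber, 0)

    def poll(self, subscriber: str) -> List[str]:
        # Return everything this subscriber has not seen yet, then advance
        # only this subscriber's position.
        start = self._positions[subscriber]
        batch = self._log[start:]
        self._positions[subscriber] = len(self._log)
        return batch


clicks = ToyTopic("clicks")
clicks.publish("a")
clicks.publish("b")              # zero subscribers so far; records are kept
clicks.subscribe("billing")
clicks.subscribe("analytics")
print(clicks.poll("billing"))    # prints ['a', 'b']
clicks.publish("c")
print(clicks.poll("billing"))    # prints ['c']
print(clicks.poll("analytics"))  # prints ['a', 'b', 'c']
```

Because reading only moves the reader's own position, one subscriber consuming a record does not take it away from the others, which is what distinguishes this model from a queue where each message goes to a single reader.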
